This is a space for us to post and discuss common pitfalls, problems, and stories about the implementation and adoption of Agile methodologies. Yes, we will also write about solutions, recommendations, and more...

Sunday, October 29, 2017

Sunday, June 18, 2017

Requirements, Acceptance Criteria, and Scenarios

What are they? How do they differ? How are they related?

Context

We are building a very basic calculator, one that only supports the four basic operations: Addition, Subtraction, Multiplication, and Division.

Requirements

(from the SWEBOKv3 @ http://www4.ncsu.edu/~

What are they?

- At its most basic, a software requirement is a property that must be exhibited by something in order to solve some problem in the real world.

What do they do?

- Express the needs and constraints placed on a software product that contribute to the solution of some real-world problem.

Properties:

- An essential property of all software requirements is that they be verifiable as an individual feature as a functional requirement or at the system level as a nonfunctional requirement.

- Priority rating

- Uniquely identified

Types (Categories) of Requirements

- Product: A product requirement is a need or constraint on the software to be developed (for example, “The software shall verify that a student meets all prerequisites before he or she registers for a course”).

- Process: A process requirement is essentially a constraint on the development of the software (for example, “The software shall be developed using a RUP process”).

- Functional: Functional requirements describe the functions that the software is to execute; for example, formatting some text or modulating a signal. They are sometimes known as capabilities or features. A functional requirement can also be described as one for which a finite set of test steps can be written to validate its behavior.

- Nonfunctional: nonfunctional requirements are the ones that act to constrain the solution. Nonfunctional requirements are sometimes known as constraints or quality requirements.

Examples

We're going to map each of the basic arithmetic operations to a single requirement, so we'll have:

- Req-1: The calculator must support the Addition operation.

- Req-2: The calculator must support the Subtraction operation.

- Req-3: The calculator must support the Multiplication operation.

- Req-4: The calculator must support the Division operation.

Acceptance Criteria

A set of statements indicating how to judge whether a given [software] component or system satisfies a certain requirement. Each element (criterion) is a specific statement.

Examples

We, as math experts, know that these operations have certain "properties". We can think of these properties as rules or statements that further define some aspect of the requirement (arithmetic operation). So, now we'll take the Addition as the subject of our examples. We'll have one Criterion for each of the Addition Operation's properties.

- Cri-1-1: The calculator must comply with the Commutative property for the Addition operation.

- Cri-1-2: The calculator must comply with the Associative property for the Addition operation.

- Cri-1-3: The calculator must comply with the Identity property for the Addition operation.

- Cri-1-4: The calculator must comply with the Distributive property, with respect to Multiplication, for the Addition operation.

Scenarios

A set of concrete examples used to validate a single Acceptance Criterion.

Examples

Now we'll take the Commutative property for the Addition operation, as the subject of our examples of Scenarios. What we need are concrete Addition expressions that validate the "presence", or "proper implementation" of the Commutative property (Acceptance Criterion) for the Addition operation (Requirement).

- Sce-1-1-1: 4 + 2 = 2 + 4

- Sce-1-1-2: 10 + 5 = 5 + 10

- Sce-1-1-3: 1 + 2 + 3 = 3 + 2 + 1
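The scenarios above can be written down as executable checks. What follows is only a minimal sketch in Scala (the language used for the code samples elsewhere on this blog). The `add` function and the `AdditionScenarios` object are hypothetical stand-ins for the calculator's Addition operation, not part of any real calculator.

```scala
object AdditionScenarios {
  // Hypothetical stand-in for the calculator's Addition operation (Req-1).
  def add(a: Int, b: Int): Int = a + b

  // Each scenario holds its id plus the left- and right-hand side of one concrete expression.
  val scenarios: Seq[(String, Int, Int)] = Seq(
    ("Sce-1-1-1", add(4, 2), add(2, 4)),
    ("Sce-1-1-2", add(10, 5), add(5, 10)),
    ("Sce-1-1-3", add(add(1, 2), 3), add(add(3, 2), 1))
  )

  // Cri-1-1 is considered satisfied (for these examples) only if every scenario passes.
  val results: Seq[(String, Boolean)] =
    scenarios.map { case (id, left, right) => (id, left == right) }

  def main(args: Array[String]): Unit =
    results.foreach { case (id, ok) =>
      val status = if (ok) "pass" else "fail"
      println(s"$id: $status")
    }
}
```

Passing scenarios do not prove the Commutative property in general; they only give concrete, repeatable evidence for the criterion.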

A picture is worth a thousand words

Here is a breakdown structure of these three concepts:

Requirements, Acceptance Criteria, and Scenarios breakdown
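The same breakdown can also be expressed as a small data structure. This is a sketch under my own assumptions: the case class names and the `criteriaWithoutScenarios` helper are hypothetical and only illustrate how the identifiers used in this post (Req-1, Cri-1-1, Sce-1-1-1) nest inside each other.

```scala
object Breakdown {
  // Three levels of detail: a Requirement groups Criteria, a Criterion groups Scenarios.
  final case class Scenario(id: String, example: String)
  final case class Criterion(id: String, statement: String, scenarios: Seq[Scenario])
  final case class Requirement(id: String, statement: String, criteria: Seq[Criterion])

  // The Addition requirement with its Commutative criterion and the three scenarios from this post.
  val addition: Requirement = Requirement(
    "Req-1",
    "The calculator must support the Addition operation.",
    Seq(
      Criterion(
        "Cri-1-1",
        "The calculator must comply with the Commutative property for the Addition operation.",
        Seq(
          Scenario("Sce-1-1-1", "4 + 2 = 2 + 4"),
          Scenario("Sce-1-1-2", "10 + 5 = 5 + 10"),
          Scenario("Sce-1-1-3", "1 + 2 + 3 = 3 + 2 + 1")
        )
      )
    )
  )

  // Hypothetical helper: lists the criteria that still lack concrete scenarios.
  def criteriaWithoutScenarios(requirement: Requirement): Seq[String] =
    requirement.criteria.filter(_.scenarios.isEmpty).map(_.id)
}
```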

Summary

What are they? See the definitions above.

How do they differ?

Requirements are high-level descriptions of system characteristics. Acceptance Criteria are the elements that make a requirement "verifiable": they must state how to determine whether the software system has properly implemented the requirement.

Often, acceptance criteria are not detailed enough to be useful in practice, so we need concrete examples of Input-Output, Input-Response Time, etc.; those are the Scenarios.

How are they related?

From the above descriptions, you can infer that they are three levels of detail of the same thing: what we expect the system to do.

But, what's the deal with all this?

- Everyone involved in the SDLC process must understand what they are, how they differ, and why all three levels are required.

- Requirements / User Stories without Criteria and Scenarios will be too subjective to be verifiable.

- Criteria and Scenarios alone will be too difficult to manage without a grouping element (the parent requirement).

Using all three levels brings many benefits, such as better effort estimation of Stories, Criteria, and Tasks. It also brings some challenges about how to express, document, and/or automate those validations. We can review all this in another article; here I just wanted to point out their differences and give some simple examples of each one.

Labels:

acceptance criteria,

en_us,

requirements,

scenarios

Wednesday, May 31, 2017

Business Value vs Effort

After a quick poll ( https://plus.google.com/+LorenzoSolano/posts/U6ZSU5t22vA ) asking about the relation between Story Points and Business Value, I want to talk about this misconception.

Quick Definitions (context: Agile, Scrum, Software Development)

[Delivered] Business Value

An increase in some aspect of an organization due to a change in a software product. That aspect could be a revenue stream, risk mitigation, etc.

In simple words, the delivered business value after an iteration / sprint is the increase in the business’s capacity to generate more money or to avoid certain losses (financial, reputational, etc.).

Story Points

The perceived amount of effort that a development team requires to build a certain feature into a software system.

The main concepts here are: perceived, and a [specific] development team. This means that effort is a subjective measurement, and that the “subject” is one particular software team in the entire universe. You cannot compare one team to another in terms of how many Story Points (effort) they assign to a given feature to be built.

Story Points and Business Value are Unrelated

Scenario

Imagine two software development teams given the task of estimating how much effort (not hours / time, not money) they, as a team, will require to build Feature X. Both teams have the exact same context (imaginary, of course): they belong to the same organization, respond to the same stakeholders, and work with the same technology stack and the same tools.

Where they differ is in their seniority (experience) level. So, we have a Beginner’s Team and a Senior’s Team. If you ask the beginners about the effort required to build Feature X, they will respond with an estimate much larger than the seniors'.

From the point of view of the business owner, the value added to the organization by Feature X (after construction) is the same. They just want the new functionality, no matter how hard or easy it is for the builders to deliver it.

For a less abstract example, think of the amount of business value added by Feature X as a liter of fresh water in the desert. Imagine that your family lives one mile away from the water well,

( water well: "business value" holder ) Bayuda Desert Well by Clemens Schmillen CC. https://commons.wikimedia.org/wiki/File:BayudaDesertWell.jpg

and that you need to get that liter of water to cook the day’s meals. That’s your business value: you just need the water, no matter how you get it.

Now comes the effort: if you must go on foot and carry the water back to your house, the effort required will be high.

( effort required by the "Beginner's Team" ) Desert walk https://www.flickr.com/photos/maartmeester/5130198174 by Maarten van Maanen @ Flickr.com

But if you use a camel to go and get the water, the task becomes easier.

( effort required by the "Senior's Team" ) Camel at desert https://pixabay.com/en/camel-desert-sand-mongolia-692648/ by hbieser @ pixabay.com

Conclusions

From this (admittedly imperfect) comparison, I want to point out some key takeaways:

- Do not compare teams by their average velocity (Story Points per Sprint)

- Do not assume that one team is delivering more business value because it has a higher “velocity” than another

- A team’s velocity is for its own use only

- You can compare past and current velocity, analyze trends, and respond to abrupt changes in velocity (up or down) as an indicator that something is happening: new members, rotation, infrastructure / technology stack changes, etc. Obviously, the comparison must be between measurements of the SAME TEAM, past and present.
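As an illustration of that last takeaway, here is a rough Scala sketch that compares each sprint of ONE team against that same team's recent history. The velocities, the three-sprint window, and the 30% threshold are all made-up assumptions for the example; a real team would pick its own values.

```scala
object VelocityTrend {
  // Hypothetical velocities (Story Points per Sprint) of a single team, oldest first.
  val velocities: Vector[Double] = Vector(21.0, 23.0, 22.0, 24.0, 13.0, 22.0)

  // Illustrative assumptions: look back three sprints, flag deviations above 30%.
  val window = 3
  val threshold = 0.30

  // Returns the (zero-based) indexes of sprints whose velocity deviates abruptly,
  // up or down, from the average of the `window` sprints right before them.
  // Assumes the baseline average is never zero.
  def abruptChanges(history: Vector[Double]): Vector[Int] =
    (window until history.length).filter { i =>
      val baseline = history.slice(i - window, i).sum / window
      math.abs(history(i) - baseline) / baseline > threshold
    }.toVector

  def main(args: Array[String]): Unit =
    println(abruptChanges(velocities))
}
```

A flagged sprint is only a prompt to ask what happened (new members, rotation, stack changes); it says nothing about the business value delivered, and nothing about other teams.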

Labels:

agile estimates,

agile misconception,

business value,

effort,

en_us,

esfuerzo,

estimaciones agiles,

ideas falsas sobre agil,

puntos de historia,

story points,

valor de negocio

Sunday, June 12, 2016

Professionalism or Class Suicide?

At SoftTopia each software product, from the smallest utility to the largest enterprise application, has a Quality Label. That label indicates the product’s quality for the given release, both internally and externally. The external measure indicates how close the final product is to the original functional requirements. The internal measure indicates how easy it is to keep the product alive over time, within the time and cost boundaries set by all stakeholders (maintainers, customers, owners, etc.).

Let’s suppose that this “label” is an obligation at SoftTopia, so, no software product can be released without that information.

Case A

SoftTopia had implemented that norm since the creation of the first software package, as they already had a quality-oriented culture when they were hit by the technological revolution.

Case B (ours)

The computer program was created in the 1840s, at SoftTopia. 175 years later, and with millions of software products in use, the SoftTopia leaders released the new quality norm. This is a free-market society, so they only demand that the quality label be placed on each product; the customer is the one who decides which product to buy. Even so, some industries are regulated because of their impact on society, like the health sector, hydrocarbons, government, food, public transportation, civil aviation, and defense.

What happens next?

• What will the software professionals and businesses that are unable to create products according to the new norm do?

• How will a government institution handle finding out that almost all of its suppliers deliver software with a quality level below the norm?

• What will the private sector do when its customers realize that a good share of the operational costs is due to poor quality?

• How will universities and other educational institutions sell their software engineering syllabi after realizing that their graduates are not able to build software according to the new norm?

Let’s now break the SoftTopia tale

If, little by little, the software professionals and companies in the industry start to publish, voluntarily, the quality level of their products: will that be Professionalism or Suicide?

What do you think? …

Sunday, June 5, 2016

Top Secret: Source Code or Underwear

Questions

Why, after attending a coding dojo, are developers reluctant to share their code in public (GitHub or similar)?

Is our coding style so secret that we cannot share it?

Would it be embarrassing if someone looked at your unfinished, mistake-ridden practice material?

Are we only allowed to produce "perfect" code?

Is your code like your underwear?

I don't know the answers to any of these questions, but if you do, please comment: what blocks you from showing your practice code in a public repository?

Sunday, May 29, 2016

Another reason for Pair Programming

Some weeks ago we (the development team) were paying down some technical debt, and before the coding session we did some planning. During the short planning meeting I told my teammates that we would be doing our refactoring in pairs (pair programming).

I assigned the pairs and established two rounds. During each round a pair needs to complete only one task per developer; then the pairs must switch. At the end of the second round, any developer with pending work (he; yes, all boys, boring) must complete it alone. That way, during the day everyone gets to work with two partners.

After my 2 to 3 minute explanation someone asked:

- Why do we need to do pair programming?

- Because I want to force some level of knowledge transfer, I said

That question got stuck in my head the whole day, first because for me the answer was so obvious, and also because there were so many other reasons, like:

- To learn IDE tricks

- Double checking of (complex, and error prone) refactoring

- Bonding: the sense that we are fixing "our" work, not just dealing with private garbage left by me

- Etc.

Results & Pros

- We managed to reduce the issues reported by our code quality tool by 17%

- In the process we learned new IDE tricks from peers, or discovered them out of laziness (trying to change more code in less time)

- We improved HTML files by removing unnecessary attributes or deprecated elements (per the HTML5 spec)

- We discussed why the cherry-picked list of violations to fix was important and how those violations could affect us in the future

- By the end of the session we all agreed to set apart one day of each sprint to pay old technical debt

- Now everyone is more vigilant, during code reviews, for newly introduced violations to coding standards

Cons

- Most of the time it was tedious manual labor

- Around 3 pm everyone was exhausted

- Merge conflicts: because I distributed "violations" by type, not by physical file or class, there was a high frequency of conflicts

Retrospective

Maybe it is better to assign work by file, or by groups of files. That way conflicts will only occur when public interfaces get touched.

Also, a per class / file cleaning session is not so boring because you get to handle different kinds of violations.

Labels:

agile,

en_us,

pair programming,

reasons,

team

Sunday, May 22, 2016

Canned Software

It’s the day you go shopping (groceries), so you go to your preferred supermarket and start your routine. You take all the fresh goods, and then head to the canned ones. Your children love canned chicken soup; you know it’s not as healthy as the home-made version, but you use it as a prize for good behavior.

They take a two-week supply and hand it to you to put all the cans in the shopping cart. You, as a responsible parent, inspect the cans one by one, looking for defects and the most important fact: the expiration date. You realize that all cans have the same taste, but they are from different brands. Some have already expired (last year!), some will expire in about a month, and finally you see a group of units with an expiration date about one year in the future. As everyone expects, you pick the last group (it is always good to have some canned food in case a zombie apocalypse occurs).

Now imagine, just for a moment, that instead of canned chicken soup, you went to shop canned software. And that software will be used to run your business, your brand new car, your home fire detection system, or something as delicate as a life support medical machine.

Continuing with this story, imagine that instead of an expiration date the software cans are labeled with two important pieces of information: what they do (functionalities), which would be the ingredients if they were chicken soup; and the expected date when that software will no longer be maintained by its creators (expiration date). That last piece of information speaks to the internal quality of the software. It tells you how easily this software can be extended or fixed. Going back to the chicken soup, we may have:

Rotten Software Can

- A can of software (soup) with a past Expiration Date: it is no longer possible for the team working behind that software to fix, add, or change features, because the product is a total mess (internally).

- A can of software (soup) with an Expiration Date in the near future: this is a software system (project) that is already dying. Its internal quality is reaching the point of no return. In a matter of months this system will no longer be maintainable by its team.

- A can of software (soup) with an Expiration Date so distant that nobody can really say when it will happen: this system is the most reliable, most extensible, and least risky one.

Fresh Software Can

The second quality feature (expiration date) is what is commonly called the Maintainability Index.

So, please, the next time you need to buy a software system, check its expiration date. You don’t want to poison your business, home, car, or children.

Maintainability Index: opinions and usefulness

The usage of this metric is not universally accepted in the software industry. For example, check out Think Twice Before Using the "Maintainability Index" (van Deursen 2014), where the author explains why he is not sure the metric should be used.

The main concern is about the reference data (baseline) used to measure software systems. The maintainability index can be considered a relative metric. It is common practice for software laboratories to test systems (for their internal quality properties) and use that data to build a ranking. If the baseline does not represent the universe of software products relevant to the subject of the analysis, the output is not very useful.

Thankfully, labs like the Software Improvement Group (SIG) calibrate their base data yearly.

Wednesday, May 18, 2016

Crazy Ideas

Imagine that the Gods of Code gave you a magic wand*. This impressive tool allows you to instantly analyze a code base and give it a score from 0 to 10 for its maintainability, where zero (0) means impossible to change and ten (10) means really easy to change.

Suddenly, you receive three consulting proposals from distinct clients (A, B, and C). They are requesting that you implement some new features in their flagship product. Using your magic wand, you determine that:

- Product A has a maintainability index of 6,

- Product B has one of 1.5, and

- Product C has a maintainability index of 9

Your potential clients have stated that their product is mission critical and that the impact of introducing new defects (bugs) to that product can be catastrophic. Because of that they will transfer that risk to you: any new bug found during a two-month certification period needs to be fixed ASAP and without any additional payment.

Assuming a pricing strategy X (time based, effort based, etc.), can we

- Charge Client C the regular rate?

- Charge Client A the regular rate adjusted (multiplied) by some risk based factor (inversely proportional to the maintainability index)?

- Just drop client B and avoid that risk?
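To make the three options concrete, here is one possible way to write such a rule down in Scala. Everything numeric in it is an assumption of mine for illustration: the reference index (9) at which the regular rate applies, the minimum index (3) below which the work is declined, and the simple "reference / index" factor, which is just one of many factors that are inversely proportional to the maintainability index.

```scala
object RiskBasedPricing {
  // Illustrative assumptions, not a recommendation:
  val referenceIndex = 9.0 // at or above this index the regular rate applies
  val minimumIndex = 3.0   // below this index the engagement is declined

  // Returns the adjusted rate, or None when the risk is considered too high to accept.
  def quote(regularRate: Double, maintainabilityIndex: Double): Option[Double] =
    if (maintainabilityIndex < minimumIndex) None
    else if (maintainabilityIndex >= referenceIndex) Some(regularRate)
    else Some(regularRate * referenceIndex / maintainabilityIndex) // factor grows as the index drops

  def main(args: Array[String]): Unit = {
    val regularRate = 100.0 // hypothetical rate per unit of work
    Seq("A" -> 6.0, "B" -> 1.5, "C" -> 9.0).foreach { case (client, index) =>
      println(s"Client $client: ${quote(regularRate, index)}")
    }
  }
}
```

With these made-up numbers Client C pays the regular rate, Client A pays one and a half times that rate, and Client B gets no quote at all.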

Do you get where I’m going?

- Can we educate our customers by using a pricing strategy that directly reflects the level of risk and effort we need to put in to work with their messy piece of code?

- With time, and some hard experiences, will our customers be more vigilant about the quality of their code base, and maybe start demanding more quality from all developers, inside or outside their organization?

* Don’t wait for the Gods, you can use SonarQube, PMD, ReSharper, Checkstyle, and many others.

Labels:

code-quality,

effort,

en_us,

maintainability,

maintainability-index,

pricing-strategy,

risk

Sunday, January 31, 2016

SOLID Principles summary for CodeCampSDQ 5.0 attendees

This is a summary of the SOLID principles to be used as an index by the CodeCampSDQ 5.0 (2016) attendees.

Are you lost?

If you don't know what the SOLID principles are, or what CodeCampSDQ is, please read the following two sections. If you attended the event and are looking for the talk material, jump to What is SOLID?

What is CodeCampSDQ?

In summary (check out the official site), CodeCampSDQ is

Just a group of "idealistic dreamers" who think they can change the world, a CodeCampSDQ at once.

With the following mission (as of this writing)

The technical conference CodeCampSDQ tries to educate the community of professional Information Technology (IT) of the Dominican Republic, fostering a spirit of collaboration and sharing of knowledge for our attendees.

The conference official site is http://codecampsdq.com/ and Facebook page is https://www.facebook.com/CodeCampSDQ

Is the 5.0 (2016) a product version?

No, it means that this is the fifth edition of the event and that it takes place in 2016.

What is SOLID?

SOLID is a mnemonic acronym for five basic principles of object-oriented (OO) programming and design. The principles were stated by Uncle Bob (Robert C. Martin) in the early 2000s.

The initials stand for:

- S: Single responsibility principle (SRP)

- O: Open/closed principle (OCP)

- L: Liskov substitution principle (LSP)

- I: Interface segregation principle (ISP)

- D: Dependency inversion principle (DIP)

Extended version of each principle

I've written a summary of each principle, presented in a problem-solution way. Each post has the following basic structure:

- Brief description of the principle

- A code sample not honoring the principle, aka the Dirty version

- An explanation of why the code violates the principle, along with the proposed solution

- The solution presented as the Clean version, and

- Finally, an explanation of how the principle relates to Agile Software Development

This is the list of posts:

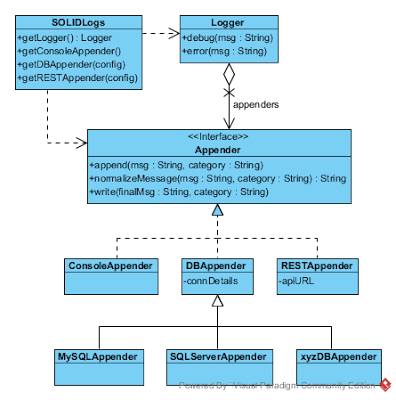

Some of them have small UML diagrams to help express the design.

Where is the code?

All code samples used on the four posts are in a single GitHub repo lsolano/blog.solid.demo

Labels:

agile,

CodeCampSDQ,

en_us,

oo design,

public talk,

SOLID,

solid principles

Monday, January 18, 2016

SOLID Principles: ISP (4 of 4)

If you missed the previous post from this series, check it out: SOLID Principles: LSP (3 of 4).

Interface segregation principle: ISP

The principle states that

"No client should be forced to depend on methods it does not use."

In other words, what the principle says is that components should be discreet: if you ask someone their name you don't want to learn that they have been divorced four times and like Italian food.

Imagine you are working with an input mapped to a Camera. The stream of data coming from that source is Read Only and Non Seekable. You have no way to "write" to the camera feed, or to seek the camera to a specific time; you either read the data or lose it. But because you are using a general-purpose IO framework you end up using an InputStream with reset() and mark() / seek() methods.

What the ISP says for cases like this is to split the interface into as many smaller ones as the clients need. The division is done by looking at the "roles" the initial interface must play. In our case the InputStream is playing both the Read Only and Non Seekable roles. The first one is implied by the name prefix "Input", so we don't need to create another interface to indicate that it is a Read Only stream. But for the second role we need a way to say that our stream does not allow seek operations. So we could split the InputStream into two components: InputStream and SeekableStream. The first one stays as is, with the seek-related methods removed. The second one has only the seek-related methods.
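A minimal Scala sketch of that split could look like the following. It is not taken from any real IO framework or from the sample repository: the traits reuse the InputStream and SeekableStream names from the text, while `read`, `seek`, `CameraInputStream`, `MediaInputStream`, and `readAll` are hypothetical names chosen for the illustration.

```scala
object RoleInterfaces {
  // Role 1: sequential, read-only access.
  trait InputStream {
    def read(): Option[Byte] // None signals the end of the stream
  }

  // Role 2: repositioning within the data. Only seekable sources take on this role.
  trait SeekableStream {
    def seek(position: Int): Unit
  }

  // A live camera feed can only be read, so it implements a single role.
  class CameraInputStream(frames: Iterator[Byte]) extends InputStream {
    def read(): Option[Byte] = if (frames.hasNext) Some(frames.next()) else None
  }

  // A recorded media source supports both roles.
  class MediaInputStream(data: Array[Byte]) extends InputStream with SeekableStream {
    private var cursor = 0

    def read(): Option[Byte] =
      if (cursor < data.length) {
        val next = data(cursor)
        cursor += 1
        Some(next)
      } else None

    def seek(position: Int): Unit = {
      require(position >= 0 && position <= data.length)
      cursor = position
    }
  }

  // This client depends only on the reading role, so it accepts both kinds of stream.
  def readAll(in: InputStream): List[Byte] =
    Iterator.continually(in.read()).takeWhile(_.isDefined).collect { case Some(b) => b }.toList
}
```

With this shape, a client that needs to seek must ask for a SeekableStream, and passing it a CameraInputStream is rejected by the compiler instead of failing at run time.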

Related Concepts

In the previous example we have an initial InputStream playing too many roles; such interfaces are usually called "Fat Interfaces".

After the cleaning process, we end up with two more specific interfaces, one to only read and another allowing us to seek. Those interfaces are called "Slim Interfaces" or "Role Interfaces", meaning that each describes a single role.

Of course, that does not mean that we are unable to implement both interfaces in a single concrete component. But for clients expecting just one of the roles we can declare that the given component fulfills that role, and no more, by casting the concrete implementation to the needed role interface.

Something like this:

    CameraOrMediaInputStream camInput = new CameraOrMediaInputStream(...);
    CameraReader camReader = new CameraReader((InputStream)camInput);

    CameraOrMediaInputStream mediaInput = new CameraOrMediaInputStream(...);
    MediaReader mediaReader = new MediaReader((SeekableStream)mediaInput);

Casts are redundant in many OO languages but were added to imply the signature of both constructors expecting the streams.

Be aware that "Role Interface" does not mean "Single Method Interface": a role may be fulfilled by a single method, or by a sequence of calls to related methods.

Code sample

Code samples are in the GitHub repo lsolano/blog.solid.demo

The code was written using Scala 2.11.7 on the JVM 1.8.0u20. The example for this principle is an Automatic Teller Machine (ATM). Our ATM is located in an international airport, so it must handle withdrawals with currency conversions. The ATM also supports deposits using both cash and cheques.

Here you have a summary of the use cases:

Fat Interface

Here is the code for the "fat" ATMInteractor component:

    class ATMInteractor(securityService: SecurityService,
                        withdrawalService: WithdrawalService,
                        depositService: AnyRef,
                        currencyRatesService: AnyRef) {
      require(securityService != null)
      require(withdrawalService != null)
      require(depositService != null)
      require(currencyRatesService != null)

      def validate(request: CustomerValidationRequest): CustomerValidationResponse = {
        val validation = securityService.validateCustomer(PlasticInfo(request.secret, request.pin))
        CustomerValidationResponse(validation.valid)
      }

      def withdrawal(request: WithdrawalRequest): TransactionResponse = {
        val response = withdrawalService.withdrawal(com.malpeza.solid.isp.model.Withdrawal(request.pin, request.amount))
        val failReason = response.reason match {
          case Some(r) => r match {
            case com.malpeza.solid.isp.model.InsufficientBalance => InsufficientBalance
            case _ => CallBank
          }
          case _ => CallBank
        }

        TransactionResponse(response.done, Option(failReason))
      }

      def deposit(request: AnyRef) = ???
    }

    object ATMInteractor {
      def apply(securityService: SecurityService,
                withdrawalService: WithdrawalService,
                depositService: AnyRef,
                currencyRatesService: AnyRef): ATMInteractor = {
        new ATMInteractor(securityService, withdrawalService, depositService, currencyRatesService)
      }
    }

What is wrong with this code: ISP?

Simply put, this component is doing too much. It handles all use cases: Customer Validation, Withdrawal, and Deposit. Because of that, it has too many dependencies (services).

If you are validating a customer you don't need to know anything about Deposits or Withdrawals.

Cleaning the code

Honoring the ISP

We need to split all these responsibilities and create specialized components able to handle each interaction (transaction). So we'll end up with three components named CustomerValidation, Withdrawal, and Deposit; each one representing a use case. To keep this post short, the Deposit and Withdrawal with currency conversions scenarios were left out.

This is the revised class diagram showing only the important components:

[Figure: Clean Interfaces]

CustomerValidation code:

    class CustomerValidation(securityService: SecurityService) {
      require(securityService != null)

      def validate(request: CustomerValidationRequest): CustomerValidationResponse = {
        val validation = securityService.validateCustomer(PlasticInfo(request.secret, request.pin))
        CustomerValidationResponse(validation.valid)
      }
    }

    object CustomerValidation {
      def apply(securityService: SecurityService) = new CustomerValidation(securityService)
    }

Withdrawal code:

    class Withdrawal(withdrawalService: WithdrawalService) {
      require(withdrawalService != null)

      def withdrawal(request: WithdrawalRequest): TransactionResponse = {
        val response = withdrawalService.withdrawal(
          com.malpeza.solid.isp.model.Withdrawal(request.pin, request.amount))

        val failReason = response.reason match {
          case Some(r) => r match {
            case com.malpeza.solid.isp.model.InsufficientBalance => InsufficientBalance
            case _ => CallBank
          }
          case _ => CallBank
        }

        TransactionResponse(response.done, Option(failReason))
      }
    }

    object Withdrawal {
      def apply(withdrawalService: WithdrawalService) = new Withdrawal(withdrawalService)
    }

Agile Link

With the ISP we gain orthogonality. By that I mean the following senses of the word:

statistically independent

or

non-overlapping, uncorrelated, or independent objects of some kind

In other words, we get components with little or no relation to each other. If one must change, the others remain untouched. This minimizes the impact of changes.

Orthogonality can be applied to architectural layers such as IO, Persistence, Transactions, Logging, etc., or to behaviors, as in the sample project presented here. The last version has a clear separation of use cases. We could implement all those behaviors in a single component, but that would make the code harder to maintain.

With so few use cases it is difficult to see the need for this (over)engineering, but try to imagine the following:

You are working on the back-end API of an online retailing product. It's the fifth year since the API's initial version, and you have about 500 different use cases, each one with some pretty complicated scenarios such as:

- Checkout with mixed payment methods: Coupons + Prepaid Card + Credit Card + PayPal, etc.

- Manage upper bounds for customers by category to protect them from errors or malicious actions

- Single purchase with multiple items in arbitrary groups, allowing for shipment to different addresses

- Manufacturer promotions by random distribution for customers matching certain profiles

- ...

How much time do you think you would spend each time you need to add a new feature or change an existing one if all these cases are implemented in a single component, or distributed within a small group of components?

Series links

Previous, SOLID Principles: LSP (3 of 4)

Next: none, this is the last one.

Wednesday, January 6, 2016

SOLID Principles: LSP (3 of 4)

If you missed the previous post from this series, check it out: SOLID Principles: OCP (2 of 4).

Liskov substitution principle: LSP

This principle states that:

"objects in a program should be replaceable with instances of their sub-types without altering the correctness of that program."

This introduces us to the concept of Substitutability.

Substitutability is a principle in object-oriented programming. It states that, in a computer program, if S is a sub-type of T, then objects of type T may be replaced with objects of type S (i.e., objects of type S may substitute objects of type T) without altering any of the desirable properties of that program (correctness, task performed, etc.).

Put simply: if you say you are a Duck, be one, fully. LSP differs from Duck typing (0) in that the former is more restrictive than the latter. Duck typing says that for an object to be used in a particular context it must have a certain list of methods and properties, leaving out the details about what happens when you use those methods and properties (the object's internals). So how restrictive are the LSP sub-typing rules? Here is a summary:

- Requirements on signatures

    - Contravariance (1) of method arguments in the sub-type.

    - Covariance (1) of return types in the sub-type.

    - No new exceptions should be thrown by methods of the sub-type, except where those exceptions are themselves sub-types of exceptions thrown by the methods of the super-type.

- Behavioral conditions

    - Preconditions (2) cannot be strengthened in a sub-type.

    - Postconditions (2) cannot be weakened in a sub-type.

    - Invariants (2) of the super-type must be preserved in a sub-type.

    - History constraint (the "history rule"): sub-types must not allow state changes that are impossible for the super-type. Such changes become possible because a sub-type may add new methods that alter its state in ways the parent type does not define. So the history you get after calling a certain sequence of methods must be the same for the super-type and the sub-type.

Principle requirements explained (top-down):

Contravariance of method arguments:

If your father accepts Animals, you must also accept Animals, not just Cats.

Covariance of return types in the sub-type:

If your father returns Animals, you are safe to only return Cats, because all Cats are also Animals.
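JavaScript has no static types, so the two signature rules can only be sketched with runtime checks. The following is a minimal illustration under that assumption; Kennel, CatKennel, PickyKennel and the swap operation are hypothetical names invented for this example, not part of the sample repo.

```javascript
'use strict';

class Animal {}
class Cat extends Animal {}
class Dog extends Animal {}

class Kennel {
  // Parent contract: accepts any Animal, returns some Animal.
  swap(animal) {
    if (!(animal instanceof Animal)) { throw new TypeError('Animal expected'); }
    return new Dog();
  }
}

class CatKennel extends Kennel {
  // Same input range, narrower output (a Cat is an Animal): still substitutable.
  swap(animal) {
    if (!(animal instanceof Animal)) { throw new TypeError('Animal expected'); }
    return new Cat();
  }
}

class PickyKennel extends Kennel {
  // Narrower input: rejects Animals the parent accepted. This breaks the LSP.
  swap(animal) {
    if (!(animal instanceof Cat)) { throw new TypeError('Cat expected'); }
    return new Cat();
  }
}

// Client code written against Kennel: hands over a Dog, expects an Animal back.
function clientSwap(kennel) {
  return kennel.swap(new Dog()) instanceof Animal;
}

console.log(clientSwap(new Kennel()));    // true
console.log(clientSwap(new CatKennel())); // true
try {
  clientSwap(new PickyKennel());
} catch (e) {
  console.log(e.message);                 // Cat expected
}
```

The client never changed, yet it fails as soon as the picky sub-type is passed in.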

No new exceptions:

If your father throws an AnimalException you are free to throw AnimalTooLargeException but not a ChairException.
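As a rough sketch of the exception rule, assume a parent whose contract says "admit() may reject an animal by throwing AnimalException". The Shelter classes and the tryAdmit helper below are hypothetical and exist only for illustration.

```javascript
'use strict';

// Hypothetical exception hierarchy.
class AnimalException extends Error {}
class AnimalTooLargeException extends AnimalException {}
class ChairException extends Error {}

class Shelter {
  // Contract: may reject an animal by throwing AnimalException.
  admit(animal) {
    if (!animal) { throw new AnimalException('an animal is required'); }
    return true;
  }
}

class SmallShelter extends Shelter {
  // Allowed: the new exception is a sub-type of the one callers already expect.
  admit(animal) {
    if (animal && animal.weightKg > 50) {
      throw new AnimalTooLargeException('animal is too large');
    }
    return super.admit(animal);
  }
}

class ConfusedShelter extends Shelter {
  // Not allowed: callers of Shelter know nothing about ChairException.
  admit(animal) {
    throw new ChairException('this is not a chair');
  }
}

// Client code written against Shelter only handles AnimalException.
function tryAdmit(shelter, animal) {
  try {
    return shelter.admit(animal);
  } catch (e) {
    if (e instanceof AnimalException) { return false; } // expected, handled
    throw e;                                            // a surprise escapes
  }
}

console.log(tryAdmit(new Shelter(), { weightKg: 4 }));       // true
console.log(tryAdmit(new SmallShelter(), { weightKg: 80 })); // false
try {
  tryAdmit(new ConfusedShelter(), { weightKg: 4 });
} catch (e) {
  console.log(e instanceof ChairException);                  // true
}
```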

Preconditions cannot be strengthened in a sub-type:

Imagine that you have a VeterinaryClinic with an operation called sacrifice(pet: Animal), with a contract saying that for an animal to be sacrificed it must have a deadly disease (hasADeadlyDisease property returning true).

Later you derive a VainVeterinaryClinic with a new rule: you only sacrifice pretty and deadly ill animals. If they are ugly and have a deadly illness you let them suffer. Now this sub-class has a more restrictive precondition (contract) in order to sacrifice animals. Where your code expects a Vet Clinic instance and you pass the Vain one, you could receive an exception when trying to sacrifice an ugly, deadly ill animal.
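The clinic example could look roughly like this in JavaScript. Pets are plain objects here, and the isPretty property and the endSuffering helper are assumptions made for the sketch.

```javascript
'use strict';

class VeterinaryClinic {
  // Precondition: the pet has a deadly disease.
  sacrifice(pet) {
    if (!pet.hasADeadlyDisease) {
      throw new Error('precondition failed: pet is not deadly ill');
    }
    pet.isAlive = false;
  }
}

class VainVeterinaryClinic extends VeterinaryClinic {
  // Stronger precondition: deadly ill AND pretty. This breaks the LSP.
  sacrifice(pet) {
    if (!pet.isPretty) {
      throw new Error('precondition failed: pet is not pretty');
    }
    super.sacrifice(pet);
  }
}

// Client code that only knows the VeterinaryClinic contract.
function endSuffering(clinic, pet) {
  clinic.sacrifice(pet);
  return pet.isAlive;
}

function uglyIllPet() {
  return { hasADeadlyDisease: true, isPretty: false, isAlive: true };
}

console.log(endSuffering(new VeterinaryClinic(), uglyIllPet())); // false
try {
  endSuffering(new VainVeterinaryClinic(), uglyIllPet());
} catch (e) {
  console.log(e.message); // precondition failed: pet is not pretty
}
```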

Postconditions cannot be weakened in a sub-type:

Continuing with this cruel example, if you have a post-condition for the sacrifice(pet: Animal) method indicating that after the method completes the animal must be dead (isAlive returning false), then a sub-type of the Vet Clinic class cannot alter this behavior by leaving animals alive after calling the sacrifice operation. That would be a more permissive (weaker) contract than the one defined by the super-type.
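A post-condition can be written down as a small check that every sub-type is expected to pass. In this sketch ForgetfulVeterinaryClinic, the isSedated property, and honorsPostcondition are hypothetical names.

```javascript
'use strict';

class VeterinaryClinic {
  // Postcondition: after sacrifice() the pet is no longer alive.
  sacrifice(pet) {
    pet.isAlive = false;
  }
}

class ForgetfulVeterinaryClinic extends VeterinaryClinic {
  // Weaker postcondition: the pet may still be alive afterwards.
  sacrifice(pet) {
    if (pet.isSedated) {
      super.sacrifice(pet);
    }
  }
}

// A check written only against the super-type's contract.
function honorsPostcondition(clinic) {
  const pet = { hasADeadlyDisease: true, isSedated: false, isAlive: true };
  clinic.sacrifice(pet);
  return pet.isAlive === false;
}

console.log(honorsPostcondition(new VeterinaryClinic()));          // true
console.log(honorsPostcondition(new ForgetfulVeterinaryClinic())); // false
```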

Invariants of the super-type must be preserved in a sub-type:

If the Animal class defines the name property as immutable, then Cats cannot be renamed. For Animal instances the name is an invariant throughout the lifetime of each object, so it must also be true for Cats.
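One possible way to express this invariant in JavaScript is a non-writable property defined in the base constructor. This is only a sketch; the tryRename helper is an assumption added to show what a caller would observe.

```javascript
'use strict';

class Animal {
  constructor(name) {
    // Invariant: the name never changes after construction.
    Object.defineProperty(this, 'name', {
      value: name,
      writable: false,
      enumerable: true
    });
  }
}

class Cat extends Animal {
  // Adds behavior without touching the invariant.
  meow() {
    return this.name + ' says meow';
  }
}

function tryRename(animal, newName) {
  'use strict'; // assigning to a read-only property throws in strict mode
  try {
    animal.name = newName;
    return true;
  } catch (e) {
    return false;
  }
}

const tom = new Cat('Tom');
console.log(tryRename(tom, 'Jerry')); // false
console.log(tom.meow());              // Tom says meow
```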

History constraint:

Imagine an animal cage with two operations, enter(a: Animal) and takeOut(): Animal; the cage also has a read-only property called isEmpty: boolean. If you look back at a sequence of calls to these methods (the history of an instance), you can easily predict the final value of isEmpty.

- enter(a) => takeOut() => enter(a): isEmpty is false

- enter(a) => takeOut() => enter(a) => takeOut(): isEmpty is true

Then, if you derive a CatCage from AnimalCage, the former must ensure that the same relation holds true as in the latter. You cannot have a CatCage saying that it is empty after an enter(a: Animal) call.

These rules are better explained using a strongly typed language such as Java or C#. You can check out the following test classes and their associated classes under test: NonGenericPetCageTests.java and GenericPetCageTests.java (in the java sub-directory).
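The history rule can be sketched by replaying the same sequence of calls against the super-type and its sub-types and comparing isEmpty. LeakyCatCage and the replay helper are hypothetical; the real, typed versions live in the Java tests of the repo.

```javascript
'use strict';

class AnimalCage {
  constructor() {
    this._occupant = null;
  }
  enter(animal) {
    this._occupant = animal;
  }
  takeOut() {
    const animal = this._occupant;
    this._occupant = null;
    return animal;
  }
  get isEmpty() {
    return this._occupant === null;
  }
}

// Complies: same observable history as AnimalCage.
class CatCage extends AnimalCage {}

// Violation: nothing is stored, so isEmpty stays true after enter().
class LeakyCatCage extends AnimalCage {
  enter(animal) {
    /* the cat escapes right away */
  }
}

// Replays a history of calls and reports the final value of isEmpty.
function replay(cage, operations) {
  const pet = { name: 'Tom' };
  operations.forEach(function (operation) {
    if (operation === 'enter') {
      cage.enter(pet);
    } else {
      cage.takeOut();
    }
  });
  return cage.isEmpty;
}

const history = ['enter', 'takeOut', 'enter'];
console.log(replay(new AnimalCage(), history));   // false
console.log(replay(new CatCage(), history));      // false
console.log(replay(new LeakyCatCage(), history)); // true
```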

Side note: Who is Barbara Liskov?

Very, very short bio

Barbara Liskov (born November 7, 1939 as Barbara Jane Huberman) is an American computer scientist who is an Institute Professor at the Massachusetts Institute of Technology (MIT) and Ford Professor of Engineering in its School of Engineering's electrical engineering and computer science department.

Among her achievements, together with Jeannette Wing, she developed a particular definition of sub-typing, commonly known as the Liskov substitution principle (LSP). For more information check her bio at the MIT web site.

Why am I writing about her?

In our industry (software development), and in particular in my country, the Dominican Republic, we have a huge disparity between male and female personnel. I believe that this is holding us back because, as with any aspect of life, diversity helps to avoid biases and narrow thinking.

Code sample

Code samples are in the GitHub repo lsolano/blog.solid.demo.

The code was written using JavaScript over Node.js v4.2.4. The sample API is a logging library. We'll focus our attention on the Appenders cluster (family). The main components are:

- The API entry point, called SOLIDLogs; from there you get instances of loggers and appenders.

- The Logger, with a very simple implementation: it supports only the debug() and error() operations.

- The Appender interface (contract) and all implementations: Console, REST, DB, etc. Each appender is responsible for sending the messages from the logger to its destination based on some configuration.

All Level information was left out to keep the API as simple as possible. We are assuming that the level is ALL, so debug and error messages are always sent to the appenders.

This is the first version of the Appender base (BlackHoleAppender) and its derivatives ConsoleAppender and MySQLAppender.

BlackHoleAppender (aka AppenderBase)

    function BlackHoleAppender(args) {
      this.name = (args.name || 'defaultAppender');
    }

    // The base type returns the message untouched: its contract is "allow all messages".
    BlackHoleAppender.prototype.normalizeMessage = function(message, category) {
      return message;
    };

    BlackHoleAppender.prototype.append = function(message, category) {
      var finalMessage = this.normalizeMessage(message, category);
      this.write(finalMessage, category);
    };

    BlackHoleAppender.prototype.write = function(finalMessage, category) {
      /* To be implemented by sub-classes */
    };

    module.exports = BlackHoleAppender;

ConsoleAppender

    const maxMessageLength = Math.pow(2, 30);
    var AppenderBase = require('./BlackHoleAppender.js');

    function ConsoleAppender(cons, maxLength) {
      this.console = (cons || console);
      this.maxLength = maxLength || 0;

      var finalArgs = ['ConsoleAppender'];
      Array.prototype.forEach.call(arguments, function(arg) {
        finalArgs.push(arg);
      });
      AppenderBase.apply(this, Array.prototype.slice.call(finalArgs));
    }

    ConsoleAppender.prototype = new AppenderBase('ConsoleAppender');

    ConsoleAppender.prototype.write = function(finalMessage, category) {
      switch (category) {
        case "Error":
          this.console.error(finalMessage);
          break;
        default:
          this.console.debug(finalMessage);
      }
    };

    // Messages longer than the allowed length are cut without notice.
    ConsoleAppender.prototype.normalizeMessage = function(message, category) {
      var msg = (message || '');
      var allowedLength = (this.maxLength || maxMessageLength);
      msg = msg.length > allowedLength ? msg.substring(0, allowedLength) : msg;
      return msg;
    };

    module.exports = ConsoleAppender;

MySQLAppender

    const maxMessageLength = Math.pow(2, 14);
    var AppenderBase = require('./BlackHoleAppender.js');

    function MySQLAppender(rdbmsRepository, maxLength) {
      this.repository = rdbmsRepository;
      this.maxLength = maxLength || 0;

      var finalArgs = ['MySQLAppender'];
      Array.prototype.forEach.call(arguments, function(arg) {
        finalArgs.push(arg);
      });
      AppenderBase.apply(this, Array.prototype.slice.call(finalArgs));
    }

    MySQLAppender.prototype = new AppenderBase('MySQLAppender');

    MySQLAppender.prototype.write = function(finalMessage, category) {
      switch (category) {
        case "Error":
          this.repository.persist({ text: finalMessage, category: category });
          break;
        default:
          this.repository.persist({ text: finalMessage, category: "Debug" });
      }
    };

    // Messages longer than the allowed length are cut without notice.
    MySQLAppender.prototype.normalizeMessage = function(message, category) {
      var msg = (message || '');
      var allowedLength = (this.maxLength || maxMessageLength);
      msg = msg.length > allowedLength ? msg.substring(0, allowedLength) : msg;
      return msg;
    };

    module.exports = MySQLAppender;

To see how the API is used, take a look at the following files: /js/test/dirtyTest.js and /js/test/cleanTest.js.

What is wrong with this code: LSP?

Here we have one base class and two derivatives. The base does nothing with the passed message in the normalization step; it just returns the same thing. So its contract is "allow all messages".

In contrast, the Console and MySQL appenders have a limit on the message length, so they are tightening their base type's contract. That means that when an Appender is expected and we pass the Console or MySQL version, parts of the message could be silently truncated. This is a direct violation of the LS principle: sub-types of the base appender must accept the same input range handled by the base type.
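The effect is easy to reproduce in isolation. The sketch below uses simplified stand-ins (BaseAppender and LimitedAppender are hypothetical names, not the classes from the repo) that record what they would have written.

```javascript
'use strict';

// Simplified stand-ins for the appenders; they record output in memory.
class BaseAppender {
  constructor() {
    this.written = [];
  }
  // Contract of the base type: allow all messages.
  normalizeMessage(message) {
    return message;
  }
  append(message) {
    this.written.push(this.normalizeMessage(message));
  }
}

class LimitedAppender extends BaseAppender {
  constructor(maxLength) {
    super();
    this.maxLength = maxLength;
  }
  // Tightens the contract: long messages are silently cut.
  normalizeMessage(message) {
    return message.substring(0, this.maxLength);
  }
}

// Client code written against the base contract.
function logAndReadBack(appender, message) {
  appender.append(message);
  return appender.written[0];
}

const message = 'disk /dev/sda1 is full';
console.log(logAndReadBack(new BaseAppender(), message) === message); // true
console.log(logAndReadBack(new LimitedAppender(4), message));         // disk
```

The caller gets no error and no warning; the information is simply gone.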

Cleaning the code

Honoring the LSP

At the time of this writing (2016) we have the following limitations on the length of a string when targeting the following platforms / products:

- UTF-8 is a "variable-length" encoding ranging from 1 to 4 bytes per character. It can encode all UNICODE characters, so we must assume that UTF-8 will be used to store the messages sent to the appenders.

- The worst case scenario with UTF-8 is that all characters in a string use 4 bytes so we must divide the total bytes capacity of the storage media by 4 to know the safe possible maximum length.

- JavaScript implementations can handle from 2^20 to 2^32 bytes per string. If we divide 2^32 by 4 we get 2^30, so for the ConsoleAppender the max allowed message length will be 2^30.

- MySQL (5.x) has a limit for string (varchar) columns of 2^16, again divided by 4 yields 2^14

- SQL-Server has a 2^30 bytes limit, divided by 4 gives us 2^28

With that information, and knowing that in order to honor the LSP the sub-types of BlackHoleAppender must allow at least the same message size as the super-type, we must force the base appender to handle the minimum possible message size, that is 2^14 from the MySQL implementation.
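The arithmetic above can be double-checked with a few lines. The byte limits are the figures quoted in the list; the variable names are made up for this sketch.

```javascript
'use strict';

const BYTES_PER_CHAR_WORST_CASE = 4; // UTF-8 worst case

// Storage limits in bytes, as quoted in the list above.
const byteLimits = {
  console: Math.pow(2, 32),
  mysql: Math.pow(2, 16),
  sqlServer: Math.pow(2, 30)
};

// Safe maximum length in characters for each target.
const safeLengths = {};
Object.keys(byteLimits).forEach(function (target) {
  safeLengths[target] = byteLimits[target] / BYTES_PER_CHAR_WORST_CASE;
});

// The base appender takes the smallest of them.
const baseMaxLength = Math.min.apply(null, Object.keys(safeLengths).map(function (target) {
  return safeLengths[target];
}));

console.log(safeLengths.console === Math.pow(2, 30));   // true
console.log(safeLengths.sqlServer === Math.pow(2, 28)); // true
console.log(baseMaxLength);                             // 16384, that is 2^14
```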

In order to do that we should "declare" the fact that the appenders now handle an explicit maximum message size. We must also decide what to do when the message is longer than expected. To solve this we'll introduce the maximum length as the property maxLength, and the behavior when it is exceeded as an enumeration with only two possible values: Truncate (default) and Ignore.

As an invariant, no sub-type of BlackHoleAppender should limit messages to a shorter length than its parent, in order to comply with the LSP. All these changes are captured in the following diagram:
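The messageHandling module itself is not listed in this post, so the following is only a guess at its shape: a frozen object with the two values, plus a stand-alone normalize function (a hypothetical helper) showing how each value behaves.

```javascript
'use strict';

// Assumed shape of the enumeration; the real module is not shown in this post.
const msgHandling = Object.freeze({
  truncate: 'truncate',
  ignore: 'ignore'
});

// Returns the message to write, or null when the message must be dropped.
function normalize(message, maxLength, handling) {
  const msg = message || '';
  if (msg.length <= maxLength) {
    return msg;
  }
  return handling === msgHandling.truncate ? msg.substring(0, maxLength) : null;
}

console.log(normalize('Hello World!', 5, msgHandling.truncate)); // Hello
console.log(normalize('Hello World!', 5, msgHandling.ignore));   // null
console.log(normalize('Hi', 5, msgHandling.ignore));             // Hi
```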

[Figure: Clean logging API]

BlackHoleAppender (cleaned):

    var msgHandling = require('../messageHandling');

    function BlackHoleAppender(name, config) {
      this.name = (name || 'blackHole');
      this.maxLength = (!!config.baseMaxLength ? config.baseMaxLength : 0);
      this._messageHandling = msgHandling.truncate;
    }

    Object.defineProperty(BlackHoleAppender.prototype, 'messageHandling', {
      get: function() {
        return this._messageHandling;
      },
      set: function(messageHandling) {
        this._messageHandling = messageHandling;
      }
    });

    // Messages over maxLength are truncated or dropped (null), depending on messageHandling.
    BlackHoleAppender.prototype.normalizeMessage = function(message, category) {
      var msg = (message || '');
      if (msg.length > this.maxLength) {
        if (this.messageHandling === msgHandling.truncate) {
          msg = msg.substring(0, this.maxLength);
        } else {
          msg = null;
        }
      }
      return msg;
    };

    BlackHoleAppender.prototype.append = function(message, category) {
      var finalMessage = this.normalizeMessage(message, category);
      if (!!finalMessage) {
        this.write(finalMessage, category);
      }
    };

    BlackHoleAppender.prototype.write = function(finalMessage, category) {
      /* To be implemented by sub-classes */
    };

    module.exports = BlackHoleAppender;

ConsoleAppender (cleaned):

    var AppenderBase = require('./BlackHoleAppender.js');

    function ConsoleAppender(cons, config) {
      // Initialize the base type with this appender's name and the shared config.
      AppenderBase.call(this, 'console', config);

      // Never go below the base limit (LSP invariant).
      this.maxLength = Math.max(config.consoleMaxLength, this.maxLength);
      this.console = (cons || console);
    }

    ConsoleAppender.prototype = new AppenderBase('console', {});

    ConsoleAppender.prototype.write = function(finalMessage, category) {
      switch (category) {
        case "Error":
          this.console.error(finalMessage);
          break;
        default:
          this.console.debug(finalMessage);
      }
    };

    module.exports = ConsoleAppender;

API usage example (for real usage see mocha tests):

    /* Optional: Appenders configuration override */
    var configOverride = null,
        /* Boundary interface: How to "talk" with mySQL */
        repository = new DBMSXYZRepo(),
        /* The logger */
        logger = SOLIDLogs.getLogger(),
        /* Some appender */
        appender = SOLIDLogs.getMySQLAppender(repository, configOverride);

    appender.messageHandling = msgHandling.ignore; /* default is msgHandling.truncate */
    logger.addAppender(appender);

    logger.debug('Hello World!');
    logger.error('The World Is On Fire!!!');

Agile Link

With the LS principle we gain predictability. I like the following meaning found in the Oxford dictionary:

"The fact of always behaving or occurring in the way expected."

Simply put, with LSP we avoid surprises (aka WTFs). We avoid wasting time chasing bugs from badly behaving components. If we are designing a framework, we can create tests to be run by people making extensions or sub-types of our base types, and also by people implementing our contracts (pure abstract classes, interfaces, or just words on paper).

With components designed like this, we are able to improve our estimates for change requests. Our velocity does not bounce dramatically and we get a sense of confidence both internally (the team) and externally (stakeholders). These benefits help to build a long-term team and reduce stress levels and the staff turnover rate.
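Such a shared test could be as small as one function that any appender implementation must pass. This is a sketch under assumptions: honorsBaseLength and the two sample appenders are hypothetical and not part of the repo's mocha tests.

```javascript
'use strict';

// Hypothetical contract check shipped with the framework: an appender must
// accept, unchanged, any message up to the base type's maximum length.
function honorsBaseLength(appender, baseMaxLength) {
  const message = new Array(baseMaxLength + 1).join('x'); // baseMaxLength characters
  return appender.normalizeMessage(message) === message;
}

// Two sample implementations written by "extension authors".
const compliant = {
  normalizeMessage: function (message) { return message.substring(0, 32); }
};
const tooStrict = {
  normalizeMessage: function (message) { return message.substring(0, 8); }
};

console.log(honorsBaseLength(compliant, 16)); // true
console.log(honorsBaseLength(tooStrict, 16)); // false
```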

Series links

Previous, SOLID Principles: OCP (2 of 4)

Next, SOLID Principles: ISP (4 of 4)

Labels: agile, better estimates, developer stress, en_us, javascript, js, Liskov substitution principle, mocha, node.js, oo design, predictability, solid principles, staff turnover
